By Ismael Peña-López (@ictlogist), 31 August 2007

Main categories: e-Readiness, ICT4D, Meetings

Other tags: ict4d_symposium_2007, phd

Ismael Peña-López

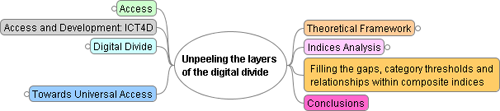

Unpeeling the layers of the digital divide: category thresholds and relationships within composite indices

The goal of this research is to contribute reflection and knowledge to the belief that there is a significant lack of tools to measure the development of the Information Society, especially tools addressed to policy makers aiming to foster digital development. We believe there is still an unexplored point of view in measuring the Information Society, one that goes from the inside out instead of from the outside in. In other words, the main indices and reports focus either on technology penetration or on a general snapshot of the Information Society “as is”. There is, nevertheless, a third approach that would work only with digital-related indicators and indices, thus including some aspects not taken into account by the technology penetration approach (e.g. informational literacy) and putting aside some “real economy” or “analogue society” indicators not strictly related to the digital paradigm. Relationships between subindices would also give policy makers useful insight on which to ground the design of their initiatives.

Presentation as a mental map:

Unpeeling the layers of the digital divide

Working bibliography

Some comments/suggestions I got:

- The importance of context when designing indices and how this context might challenge the accuracy, objectivity and suitability of these indices

- The importance, beyond infrastructures, of international service broadcasters such as the BBC or Voice of America

- Analyzing what the (real) goal or purpose of the indices is: how and, above all, why they are built the way they are

- The importance of looking not only at what worked (e.g. projects and policies to foster the information society), but also at what has failed: which projects failed, and how many

- The opportunity to develop different indexes according to the different countries, contexts, etc.

- context, context, context

Florence Nameere Kivunike

Measuring the Information Society: An Explorative Study of Existing Tools

Why assess the Information Society

- Current status

- Comparison

- Tracking progress

- Policy, decision-making

- Research-related

- Value-judgment

What is assessed

- Social

- Economic

- Institutional

Temporal concept

- Readiness

- Intensity

- Impact

Limitations

- Focusing mainly on infrastructure

- Relationships among features

- Limited consideration of context in terms of the enabling factors e.g. social or cultural setup and flexibility

- Oversimplified methods characterized by subjective approaches

Challenges of IS Assessment

- Dynamic and complex nature of the IS

- Data constraints, especially in developing countries

- Challenge of measuring transformation

More info

- Menou, M. & Taylor, R. (2006). A “Grand Challenge”: Measuring Information Societies. The Information Society, 22, pp. 261-267

Second Annual ICT4D Postgraduate Symposium (2007)

By Ismael Peña-López (@ictlogist), 24 July 2007

Main categories: Digital Divide, Digital Literacy, e-Readiness, ICT4D, Meetings

Other tags: phd, sdp2007

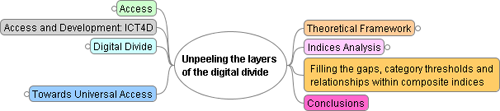

The goal of this research is to contribute reflection and knowledge to the belief that there is a significant lack of tools to measure the development of the Information Society, especially tools addressed to policy makers aiming to foster digital development. We believe there is still an unexplored point of view in measuring the Information Society, one that goes from the inside out instead of from the outside in. In other words, the main indices and reports focus either on technology penetration or on a general snapshot of the Information Society “as is”. There is, nevertheless, a third approach that would work only with digital-related indicators and indices, thus including some aspects not taken into account by the technology penetration approach (e.g. informational literacy) and putting aside some “real economy” or “analogue society” indicators not strictly related to the digital paradigm. Relationships between subindices would also give policy makers useful insight on which to ground the design of their initiatives.

Michael Best comments that it would also be interesting to test the impact that the indices measuring the information society have on policy makers and on the policies they design to foster the information society. I guess that one way to do this would be to compare, for a given country, the time series of an e-readiness indicator with the series of regulations issued during the same period.
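A minimal sketch of what such a comparison could look like, assuming two yearly series of equal length for one country: the value of an e-readiness indicator and the number of ICT-related regulations issued. The function names, the use of a lagged Pearson correlation and the figures are all my own illustrative assumptions, not real data nor a method proposed at the seminar.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have the same length (at least 2)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


def lagged_correlation(index_series, regulation_counts, lag):
    """Correlate the index in year t with regulations issued in year t + lag."""
    if lag < 0:
        raise ValueError("lag must be zero or positive")
    if lag == 0:
        return pearson(index_series, regulation_counts)
    return pearson(index_series[:-lag], regulation_counts[lag:])


# Hypothetical yearly figures for a single country (made up for illustration).
e_readiness = [3.1, 3.4, 3.9, 4.2, 4.8, 5.0]
new_regulations = [1, 2, 2, 4, 5, 7]

for lag in range(3):
    r = lagged_correlation(e_readiness, new_regulations, lag)
    print(f"lag {lag} year(s): r = {r:.2f}")
```

A high correlation at some lag would only hint that regulation follows the publication of the index; it says nothing about causality, so this is at most a first exploratory step.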

More info

SDP 2007 related posts (2007)

By Ismael Peña-López (@ictlogist), 15 September 2006

Main categories: ICT4D, Meetings

Other tags: ict4d_symposium_2006, phd

This is the briefing of the fifth session of the Annual ICT4D Postgraduate Symposium, which took place in Egham on the morning of September 15th, 2006. Here are the notes I took on the fly:

Auchariya Yongphrayoon, Royal Holloway University of London

Geographical Information Systems for Mass Valuation in Thailand

Compares econometric methods of valuation, weighing the benefits of modern technology and methodology against the traditional valuation method in terms of reliability and accuracy.

The hedonic pricing method places emphasis on property attributes and price per unit value.

The question is: are there any more attributes affecting land prices in the study areas [besides the ones considered]?
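To make the hedonic idea concrete, here is a rough sketch of a linear hedonic model, where the price per unit of a plot is explained as a sum of contributions from its attributes and fitted by ordinary least squares. The attributes (distance to a main road, distance to the city centre), the figures and the function name `fit_hedonic` are hypothetical and chosen only for illustration; the talk did not specify the model actually used.

```python
def fit_hedonic(attributes, prices):
    """Least-squares fit of price = b0 + b1*x1 + ... + bk*xk.

    attributes: one tuple of attribute values per plot.
    prices: observed price per unit for each plot.
    Returns the list [b0, b1, ..., bk].
    """
    rows = [[1.0] + [float(v) for v in attrs] for attrs in attributes]
    k = len(rows[0])
    # Normal equations (X'X) b = X'y, stored as an augmented matrix.
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * p for r, p in zip(rows, prices))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("attributes are collinear; cannot fit")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(k):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][k] for i in range(k)]


# Hypothetical plots: (km to main road, km to city centre) and price per unit.
plots = [(0.2, 3.0), (1.5, 4.5), (0.8, 1.0), (2.4, 6.0), (0.5, 2.2), (3.0, 5.1)]
price_per_unit = [950.0, 610.0, 1100.0, 400.0, 980.0, 380.0]

intercept, road_effect, centre_effect = fit_hedonic(plots, price_per_unit)
print(f"intercept: {intercept:.1f}")
print(f"per km from main road: {road_effect:.1f}")
print(f"per km from city centre: {centre_effect:.1f}")
```

Under these assumptions, asking whether more attributes matter amounts to adding a column to each tuple and checking whether the fit improves enough to justify it.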

Auchariya Yongphrayoon

Ismael Peña-López, Open University of Catalonia

The e-readiness layers: thresholds and relationships

Build a digital-only index, different from the technology-focused ones (e.g. ITU's) or the ones that combine ICT indicators and development indicators (e.g. the NRI).

The goal is to try to establish relationships among indicators (not just build an index) so that the conclusions can be used in ICT policy making.
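As a rough illustration of the difference between a single index and a set of layers, the sketch below rescales each indicator to the 0-1 range and averages indicators within each layer, keeping the layers side by side rather than collapsing them into one figure. The indicator names, the layer grouping, the min-max rescaling and the country values are all assumptions made for the example, not the actual design of the research.

```python
def min_max(values):
    """Rescale a list of numbers to the 0-1 range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def subindices(data, layers):
    """Average the rescaled indicators of each layer, per country.

    data: {indicator: {country: raw value}}
    layers: {layer name: [indicator, ...]}
    Returns {layer name: {country: score between 0 and 1}}.
    """
    countries = sorted(next(iter(data.values())))
    normalized = {}
    for indicator, by_country in data.items():
        scaled = min_max([by_country[c] for c in countries])
        normalized[indicator] = dict(zip(countries, scaled))
    return {
        layer: {
            c: sum(normalized[i][c] for i in indicators) / len(indicators)
            for c in countries
        }
        for layer, indicators in layers.items()
    }


# Hypothetical indicators for three fictional countries.
data = {
    "broadband_per_100": {"A": 2.0, "B": 10.0, "C": 22.0},
    "pcs_per_100": {"A": 5.0, "B": 30.0, "C": 55.0},
    "digital_literacy_pct": {"A": 40.0, "B": 20.0, "C": 60.0},
}
layers = {
    "infrastructure": ["broadband_per_100", "pcs_per_100"],
    "literacy": ["digital_literacy_pct"],
}

for layer, scores in subindices(data, layers).items():
    print(layer, {c: round(s, 2) for c, s in scores.items()})
```

Keeping the layers separate is what makes it possible to see, for instance, a country that does better on infrastructure than on literacy, which a single averaged index would hide.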

Julie E. Ferguson, Royal Holloway University of London

Analyzing the development effect of knowledge sharing strategies

[disclaimer: she’s the chief editor of Knowledge Management for Development Journal]

She explains the three main methods for measuring the effect of knowledge sharing for development and, building on their conclusions, is working on a (new) framework of analysis that takes the best of the three worlds (plus her own contribution ;) and is capable of impact assessment.

The main goal is to go beyond the case-study or particular approach and try to set a general way of evaluating knowledge strategies. To do so, fieldwork in Uganda will put the three methods into practice so that they can be compared.

The $1M questions: what is knowledge? what is effect? what is impact?

Julie E. Ferguson (speaking) and David Hollow (behind left)

First Annual ICT4D Postgraduate Symposium (2006)

The e-readiness layers: thresholds and relationships